Azwad Sabik, Rodolfo Finocchi

April 2016 - May 2016

Implemented a ROS package enabling autonomous navigation and landing of a quadrotor at a target location within a drone cage fitted with a grid of AR tags. Performed sensor fusion between readings from onboard cameras, IMU, and ultrasonic rangefinder (altimeter) to achieve odometry and state estimation. Utilized ROS packages for efficient kinematic transformations and AR tag detection; developed system in Gazebo simulation prior to testing.

Azwad Sabik

May 2016

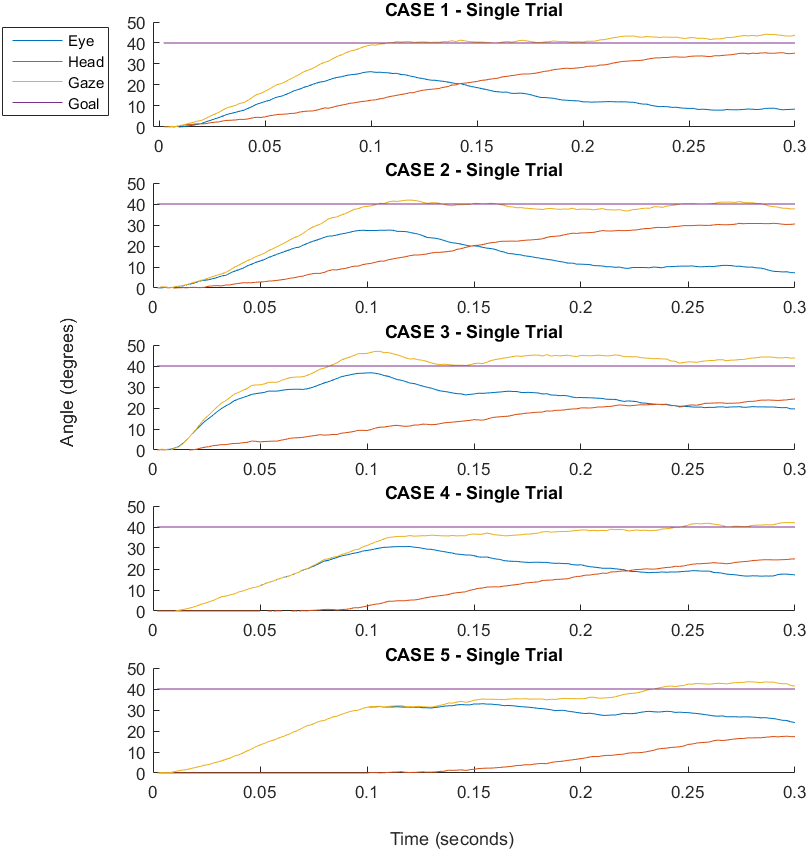

Simulated human gaze as coordinated motion of eyes and head in one dimension driven by optimal-feedback control. Performed motion planning through iterative computation of optimal inputs (as gains via Bellman equation) and state estimates (as Kalman gains).
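As a rough illustration of the two gain computations named above, the following is a minimal scalar sketch, not the project's eye-head model. It assumes a linear system `x[k+1] = a*x[k] + b*u[k] + w`, `y[k] = c*x[k] + v` with additive noise only, in which case the control and estimator gains can be computed independently. The function names and all parameter values are hypothetical.

```python
def lqr_gains(a, b, q, r, q_final, horizon):
    """Feedback gains from the backward Bellman (Riccati) recursion.

    Cost assumed: sum of q*x^2 + r*u^2 per step, plus q_final*x^2 at the end.
    The control law is u[k] = -gains[k] * x_estimate[k].
    """
    p = q_final                      # cost-to-go weight at the final step
    gains = [0.0] * horizon
    for k in reversed(range(horizon)):
        gain = (b * p * a) / (r + b * p * b)
        p = q + a * p * a - a * p * b * gain
        gains[k] = gain
    return gains


def kalman_gains(a, c, w_var, v_var, s0, horizon):
    """Estimator gains from the forward Kalman covariance recursion.

    s is the predicted (prior) state variance; w_var and v_var are the
    process and measurement noise variances.
    """
    s = s0
    gains = []
    for _ in range(horizon):
        gain = s * c / (c * s * c + v_var)
        gains.append(gain)
        s_post = (1.0 - gain * c) * s    # variance after the measurement update
        s = a * s_post * a + w_var       # variance after the time update
    return gains


if __name__ == "__main__":
    control = lqr_gains(a=1.0, b=1.0, q=1.0, r=1.0, q_final=1.0, horizon=50)
    estimator = kalman_gains(a=1.0, c=1.0, w_var=1.0, v_var=1.0, s0=1.0, horizon=50)
    print(control[0], estimator[-1])
```

When the noise depends on the control signal, as is often assumed for biological motor control, the two recursions are coupled and are typically alternated until both sets of gains converge.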

Azwad Sabik, Rohit Bhattacharya

May 2016

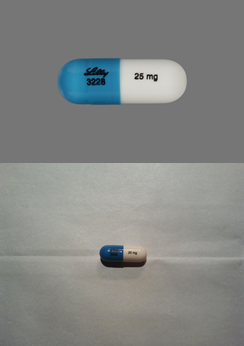

Fine-tuned and validated modified version of AlexNet in Caffe framework to perform 1000-class classification of pill images, achieving 56.1% test accuracy. Utilized a dataset of 2000 'reference' quality images plus 5000 'consumer' quality images and performed fine-tuning on multi-GPU system. Project idea drawn from NIH Pill Recognition challenge.

Azwad Sabik

December 2015

Developed system to reproduce GUI-input line art on a whiteboard using joint-level control of a UR5 robot arm. Two modes of operation were developed: one utilizing inverse kinematics to map workspace positions to joint-space states, and another utilizing differential kinematics to map workspace errors to joint-space velocities. System was developed in MATLAB.
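The differential-kinematics mode can be illustrated with a minimal sketch, shown here in Python for a hypothetical planar 2-link arm rather than the 6-joint UR5 and its MATLAB implementation. The link lengths, gain, and function names are assumptions for illustration. The workspace error is mapped to joint velocities through the inverse of the arm's Jacobian, and the joint angles are integrated forward in time.

```python
import math

LINK_1, LINK_2 = 1.0, 1.0  # hypothetical link lengths, not UR5 dimensions


def forward_kinematics(q):
    """Tool position (x, y) for joint angles q = (q1, q2)."""
    x = LINK_1 * math.cos(q[0]) + LINK_2 * math.cos(q[0] + q[1])
    y = LINK_1 * math.sin(q[0]) + LINK_2 * math.sin(q[0] + q[1])
    return (x, y)


def jacobian(q):
    """2x2 Jacobian of the tool position with respect to the joint angles."""
    s1, c1 = math.sin(q[0]), math.cos(q[0])
    s12, c12 = math.sin(q[0] + q[1]), math.cos(q[0] + q[1])
    return ((-LINK_1 * s1 - LINK_2 * s12, -LINK_2 * s12),
            (LINK_1 * c1 + LINK_2 * c12, LINK_2 * c12))


def joint_velocity(q, target, gain=1.0):
    """Map the workspace error to joint velocities: q_dot = J^-1 * gain * error."""
    x, y = forward_kinematics(q)
    ex, ey = gain * (target[0] - x), gain * (target[1] - y)
    (a, b), (c, d) = jacobian(q)
    det = a * d - b * c
    if abs(det) < 1e-9:
        raise ValueError("arm is at or near a kinematic singularity")
    return ((d * ex - b * ey) / det, (-c * ex + a * ey) / det)


def track(q, target, dt=0.01, steps=2000):
    """Integrate the joint velocities with forward Euler steps."""
    q = list(q)
    for _ in range(steps):
        dq = joint_velocity(q, target)
        q[0] += dq[0] * dt
        q[1] += dq[1] * dt
    return tuple(q)


if __name__ == "__main__":
    final_q = track((0.3, 1.0), target=(1.0, 1.0))
    print(forward_kinematics(final_q))
```

Unlike the inverse-kinematics mode, this approach never solves for a joint configuration directly; it only needs the Jacobian at the current state, at the cost of misbehaving near singular configurations.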

Azwad Sabik, Rohit Bhattacharya

October 2015 - December 2015

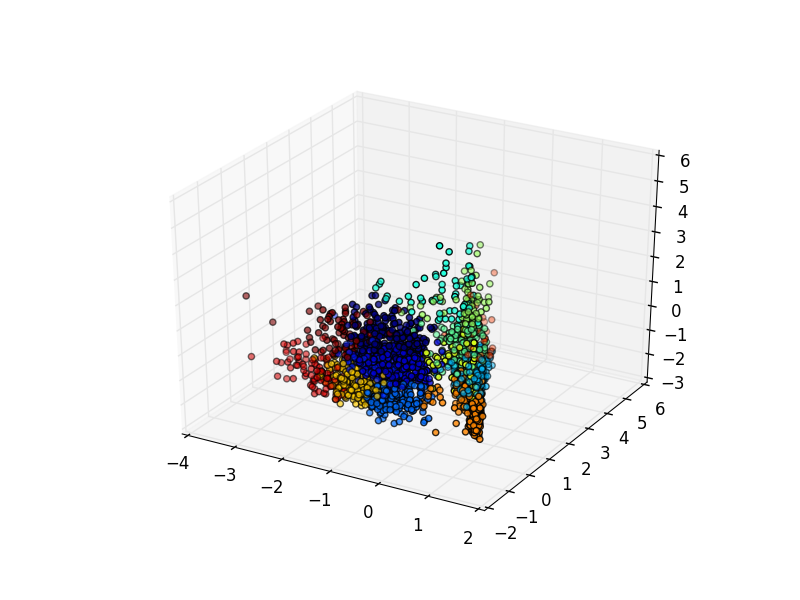

Developed pipeline to generate user-specific recommendations for character selection and gameplay behaviours for distinct temporal segments of MOBA matches. Utilized techniques of unsupervised learning (automatic feature extraction and dimensionality reduction) to allow effective identification of distinct playstyle clusters in professional League of Legends (LoL) matches. Principal component analysis (PCA), kernel PCA, locally linear embedding, and clustering via affinity propagation were explored for the purpose of learning playstyles. Partial least squares regression was utilized to generate recommendations.

Azwad Sabik, Rohit Bhattacharya, Yvonne Jiang

January 2015 - May 2015

Explored feasibility of assessing blood flow from smartphone-quality video as a low-cost alternative to laser Doppler imaging for chronic wound patients. Developed, trained, and refined support vector machine classifiers utilizing handcrafted features derived through Eulerian analysis/spatiotemporal processing and identified as potentially relevant to blood flow. Evaluated potential for Eulerian Video Magnification to improve classification results. Won award for best project in course competition.

Azwad Sabik, Edward Bryner, Sakina Girnary, Ari Messenger

February 2015 - May 2015

Built 3-DOF autonomous vehicle to perform scavenging and delivery task while adhering to competition's vehicle cost and size constraints. Implemented controllers for embedded sensing (CMUcam5, IMU) and actuation (DC/servo motors) as well as high-level control by Arduino Mega. Won first place in course competition for developing the vehicle that performed the task most efficiently.

Azwad Sabik, Rohit Bhattacharya, Haley Huang

March 2015 - May 2015

The goal of this project was functional characterization of disease-associated risk SNPs. Researched features of SNPs (single nucleotide polymorphisms) statistically associated with Crohn's Disease, Type I Diabetes, and Type II Diabetes. Focused on features potentially associating a SNP with four particular functional changes: alteration in protein activity and stability; change in gene expression level; altered splicing; altered microRNA binding. Utilized UCSC Genome Browser and other online genetic databases to study features of risk SNPs. Developed knowledge-based classifier for predicting functional change given a SNP, incorporating automatic lookup of the SNP's feature values.

Azwad Sabik, Hansin Kim, Can Zhao

November 2014 - December 2014

Developed 5-DOF controller utilizing head- and leg-motion input. Accelerometer and magnetometer data were collected via Arduino and communicated to a computer using a Python-based serial communication library; the data were subsequently processed and interpreted on the computer as mouse-and-keyboard input used to control a character in Minecraft.